GuideQ

Framework for Guided Questioning in Information Collection

Abstract

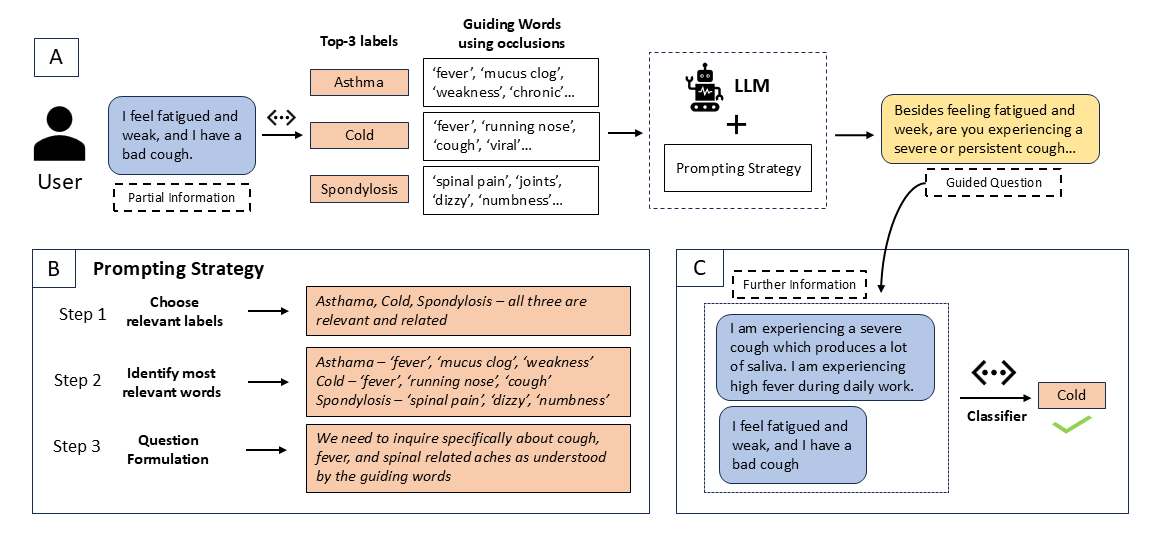

Question Answering (QA) is an important part of tasks like text classification through information gathering. Such tasks are finding increasing use in sectors like healthcare, customer support, and legal services, where responses must be collected and classified into actionable categories. Although LLMs can support QA systems, they face a significant challenge when the information needed for classification is insufficient or missing. LLMs excel at reasoning, but they answer from their parametric knowledge, whereas questioning a user effectively requires domain-specific information that guides the collection of accurate details. Our work, GuideQ, presents a novel framework for asking guided questions that build on partial information. We combine the explainability derived from the classifier model with LLMs to ask guided questions that enrich the available information, which in turn enables more accurate classification of a text. GuideQ derives the most significant keywords representative of each label using occlusion. We design GuideQ's prompting strategy around the classifier's top-3 label outputs and these significant words, so that the guided questions seek specific, relevant information and support targeted classification. Our experimental results show that GuideQ outperforms other LLM-based baselines, yielding improved F1-scores through the accurate collection of relevant additional information. We also perform various analytical studies and find that GuideQ produces better-quality questions than the baselines.

GuideQ introduces a novel framework for progressive information collection through guided questioning, helping to classify user responses more accurately by identifying and requesting missing critical information.

Key Components

- Classification Model: Uses BERT/DeBERTa to map inputs to labels

- Keyword Learning: Identifies significant words per label using occlusion techniques

- Question Generation: Uses the LLaMA-3 8B model to generate targeted questions
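The occlusion-based keyword learning step can be sketched as follows. This is a minimal illustration under stated assumptions, not GuideQ's implementation: the classifier is any function returning per-label probabilities (a hypothetical toy word-weight scorer, `toy_predict`, stands in for BERT/DeBERTa), a word is occluded by deleting it, its importance is the resulting drop in the probability of the example's label, and scores are averaged over each label's examples. The names `occlusion_scores`, `label_keywords`, `WEIGHTS` and `EXAMPLES` are all hypothetical.

```python
import math
from collections import defaultdict
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical stand-in for a fine-tuned BERT/DeBERTa classifier:
# any function mapping a token list to a probability per label.
Classifier = Callable[[List[str]], Dict[str, float]]

# Toy word weights per label (assumption, for illustration only).
WEIGHTS = {
    "billing": {"invoice": 2.0, "charge": 1.5, "refund": 1.5},
    "technical": {"error": 2.0, "crash": 1.5, "login": 1.0},
}


def toy_predict(tokens: List[str]) -> Dict[str, float]:
    """Softmax over summed word weights; a placeholder for the real model."""
    logits = {lab: sum(w.get(t, 0.0) for t in tokens) for lab, w in WEIGHTS.items()}
    z = max(logits.values())
    exps = {lab: math.exp(v - z) for lab, v in logits.items()}
    total = sum(exps.values())
    return {lab: e / total for lab, e in exps.items()}


def occlusion_scores(tokens: List[str], label: str, predict: Classifier) -> Dict[str, float]:
    """Drop in P(label) when each token is occluded (deleted) in turn."""
    base = predict(tokens)[label]
    scores: Dict[str, float] = {}
    for i, tok in enumerate(tokens):
        occluded = tokens[:i] + tokens[i + 1:]
        drop = base - predict(occluded)[label]
        # If a word occurs more than once, keep its largest drop.
        scores[tok] = max(scores.get(tok, float("-inf")), drop)
    return scores


def label_keywords(examples: Sequence[Tuple[str, str]], predict: Classifier,
                   k: int = 3) -> Dict[str, List[str]]:
    """Average occlusion scores over each label's examples and keep the top-k words."""
    totals: Dict[str, Dict[str, float]] = defaultdict(lambda: defaultdict(float))
    counts: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for text, label in examples:
        for word, s in occlusion_scores(text.lower().split(), label, predict).items():
            totals[label][word] += s
            counts[label][word] += 1
    result: Dict[str, List[str]] = {}
    for label, words in totals.items():
        avg = {w: words[w] / counts[label][w] for w in words}
        result[label] = sorted(avg, key=avg.get, reverse=True)[:k]
    return result


EXAMPLES = [
    ("I was charged twice on my invoice", "billing"),
    ("please refund the extra charge", "billing"),
    ("the app shows an error and may crash at login", "technical"),
]

keywords = label_keywords(EXAMPLES, toy_predict, k=3)
print(keywords)
```

Deleting the word is only one occlusion choice; with transformer classifiers, replacing the word with a mask or padding token is a common alternative that keeps the sequence length fixed.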

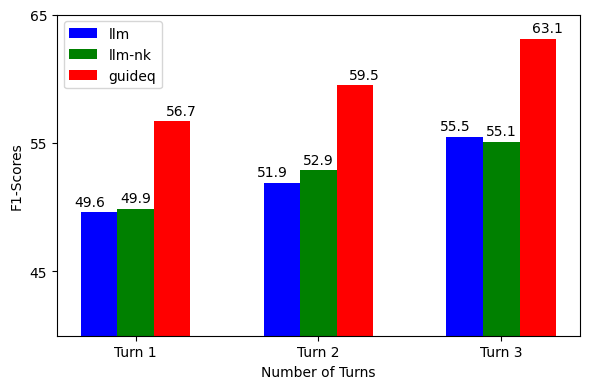

Results & Analysis

Classification Performance

| Task | Model | Precision | Recall | F1 (%) |
|---|---|---|---|---|
| CNEWS | GuideQ | 0.603 | 0.495 | 49.6 |
| DBP | GuideQ | 0.932 | 0.912 | 91.3 |
| S2D | GuideQ | 0.793 | 0.806 | 86.8 |
| SALAD | GuideQ | 0.627 | 0.591 | 58.7 |

Technical Highlights

- Uses LLaMA-3 8B for question generation

- Leverages occlusion-based keyword extraction

- Combines top-3 predicted labels with keyword information

- Supports multi-turn interactions for progressive information gathering
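A minimal sketch of how the top-3 labels, their keywords and multi-turn questioning might fit together. The prompt wording, the function names (`top_k_labels`, `build_prompt`, `guided_session`) and the stub classifier, LLM and user are hypothetical placeholders; in a real system `ask_llm` would call LLaMA-3 8B and `predict` would be the fine-tuned classifier. Appending each answer to the input before re-classifying is one assumed way of accumulating information across turns.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical signature: `predict` stands in for the BERT/DeBERTa classifier.
Predict = Callable[[str], Dict[str, float]]


def top_k_labels(probs: Dict[str, float], k: int = 3) -> List[str]:
    """Return the k labels with the highest predicted probability."""
    return sorted(probs, key=probs.get, reverse=True)[:k]


def build_prompt(text: str, labels: List[str], keywords: Dict[str, List[str]]) -> str:
    """Combine the partial input, the top-k labels and their keywords into one prompt."""
    lines = [
        "A user provided this partial description:",
        f'"{text}"',
        "The most likely categories and their indicative keywords are:",
    ]
    for label in labels:
        lines.append(f"- {label}: {', '.join(keywords.get(label, []))}")
    lines.append("Ask one short question that would best distinguish between these categories.")
    return "\n".join(lines)


def guided_session(text: str, predict: Predict, keywords: Dict[str, List[str]],
                   ask_llm: Callable[[str], str], answer_user: Callable[[str], str],
                   max_turns: int = 2, k: int = 3) -> Tuple[str, str]:
    """Ask up to `max_turns` guided questions, appending each answer to the input."""
    for _ in range(max_turns):
        labels = top_k_labels(predict(text), k)
        question = ask_llm(build_prompt(text, labels, keywords))
        text = f"{text} {answer_user(question)}"
    probs = predict(text)
    return max(probs, key=probs.get), text


# --- Toy stubs for illustration (assumptions, not part of GuideQ) ---
KEYWORDS = {
    "billing": ["invoice", "refund", "charge"],
    "technical": ["error", "crash", "login"],
    "shipping": ["delivery", "package", "tracking"],
}


def stub_predict(text: str) -> Dict[str, float]:
    """Score each label as 1 + its keyword hits, then normalize to probabilities."""
    words = text.lower().split()
    scores = {lab: 1 + sum(words.count(w) for w in kws) for lab, kws in KEYWORDS.items()}
    total = sum(scores.values())
    return {lab: s / total for lab, s in scores.items()}


label, final_text = guided_session(
    "there is a problem with my order",
    stub_predict,
    KEYWORDS,
    ask_llm=lambda prompt: "Is this about a payment, an app error, or a delivery?",
    answer_user=lambda question: "the invoice shows a double charge",
    max_turns=1,
)
print(label)  # billing
```

Before the question, the stub classifier cannot separate the three labels; the single answer adds two billing keywords, so the final prediction moves to `billing`.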